Today we’re going to take a look at how asynchronous compute can help you to get the maximum out of a GPU. I’ll be explaining the details based on the nBodyGravity sample from Microsoft – but let’s start with some background first!

Async what?

Asynchronous compute is a new concept on GPUs, but one you are probably very familiar with on CPUs, where it’s usually called SMT (simultaneous multithreading). What does this actually mean? In general, your CPU has more execution units than you can use with a single thread. For instance, a CPU may be able to execute 4 operations per clock cycle, but if your thread is waiting for a memory access to finish, the execution units are left idle. By issuing instructions from a second thread, there’s a good chance of filling those bubbles and getting higher throughput.

A GPU is very similar in this regard. You have different units like the rasterizer, the compute cores and the blending units, and each of them can become a bottleneck for a given draw call. GPUs have a much deeper pipeline than CPUs to keep this from happening, but there will still be situations where some units are idle. A good example is shadow map rendering, which is usually very heavy on the rasterizer or triangle throughput, but leaves most compute units idle.

With Direct3D® 12 and Vulkan™, developers get a tool to express which computations are allowed to happen in parallel – the graphics & compute queues. The graphics queue can use all execution resources – copy engines, compute cores, the rasterizer, and so on, while the compute queues can only use the compute cores. By scheduling tasks on a queue, the developer makes it clear to the GPU scheduler that those can be executed independently and potentially concurrently with tasks on other queues.

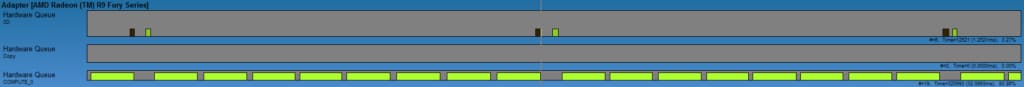

Synchronization between queues has to be done manually using fences. The default version of the nBodyGravity demo does this, for example, to periodically render the simulation results. Every few simulation steps, it synchronizes the compute queue with the graphics queue, renders the results, and, once the results have been displayed, continues the simulation. While the GPU is rendering, the compute queue is left idle. We can see this in the following GPUView trace:

We can see the compute queue execute multiple packets, pause while the graphics queue is busy, and then resume work. This results in the GPU being busy only 85% of the total time, so we’re wasting a lot of performance here. Let’s see how we can restructure the code to let the graphics and compute work execute concurrently.
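Before restructuring anything, it can help to look at the original synchronization pattern in isolation. The following is only a toy model in standard C++, not code from the sample: each queue is a list of commands, a fence is a counter that only goes up, and a round-robin loop plays the role of the GPU scheduler. The `Signal` and `Wait` commands are meant to mirror what `ID3D12CommandQueue::Signal` and `ID3D12CommandQueue::Wait` do on a real queue. All names here (`Command`, `run`, `run_serialized`) are made up for illustration, and the model assumes one simulation step per rendered frame for brevity.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

enum class Op { Execute, Signal, Wait };

// One entry in a queue. `label` is used by Execute, `fence`/`value` by
// Signal and Wait (hypothetical structure, not a D3D12 type).
struct Command {
    Op op;
    std::string label;
    std::size_t fence;
    std::uint64_t value;
};

using Queue = std::vector<Command>;

// Drains all queues round-robin, one command per queue per pass.
// A Wait stalls its queue until the fence has reached the requested value.
// Returns the labels of the Execute commands in the order they ran.
std::vector<std::string> run(const std::vector<Queue>& queues,
                             std::size_t fenceCount) {
    std::vector<std::uint64_t> fences(fenceCount, 0);
    std::vector<std::size_t> next(queues.size(), 0);
    std::vector<std::string> order;
    bool progressed = true;
    while (progressed) {  // also stops if every queue is stalled
        progressed = false;
        for (std::size_t q = 0; q < queues.size(); ++q) {
            if (next[q] == queues[q].size()) continue;  // queue is empty
            const Command& c = queues[q][next[q]];
            if (c.op == Op::Execute) {
                order.push_back(c.label);
            } else {
                assert(c.fence < fences.size());
                if (c.op == Op::Wait) {
                    if (fences[c.fence] < c.value) continue;  // stalled
                } else if (c.value > fences[c.fence]) {
                    fences[c.fence] = c.value;  // Signal: raise the fence
                }
            }
            ++next[q];
            progressed = true;
        }
    }
    return order;
}

// The serialized pattern: simulation of frame f waits for rendering of
// frame f - 1, and rendering of frame f waits for simulation of frame f.
std::vector<std::string> run_serialized(int frames) {
    const std::size_t simDone = 0, renderDone = 1;
    Queue compute, graphics;
    for (int f = 1; f <= frames; ++f) {
        const std::uint64_t v = static_cast<std::uint64_t>(f);
        compute.push_back({Op::Wait, "", renderDone, v - 1});
        compute.push_back({Op::Execute, "simulate " + std::to_string(f), 0, 0});
        compute.push_back({Op::Signal, "", simDone, v});
        graphics.push_back({Op::Wait, "", simDone, v});
        graphics.push_back({Op::Execute, "render " + std::to_string(f), 0, 0});
        graphics.push_back({Op::Signal, "", renderDone, v});
    }
    return run({compute, graphics}, 2);
}
```

In this model the two queues strictly take turns: while one is executing, the other is stuck on a `Wait`. That is the idle time we want to get rid of.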

Maxing out the GPU

So how can we improve this? In my modified nBodyGravity sample, the key change I made is to double-buffer the simulation. That is, the simulation of the next frame runs while the current frame is being rendered. As long as the render time is significantly lower than the simulation time, the compute queue is busy simulating all the time, and the graphics queue renders results as soon as they are ready. The synchronization is still similar to the original sample (a fence is used to trigger the rendering once the simulation is done), but the main difference is that the simulation data is copied into “render memory” before that. The next simulation step is then enqueued immediately, with a fence on the previous frame’s rendering job. The fence is needed to ensure that the simulation doesn’t get too far ahead. With these small changes, we end up with the following behavior:

- Simulation for frame N executes on the compute queue

- Signal for rendering of frame N

- Concurrently:

  - The simulation of frame N+1 is started on the compute queue

  - Rendering of frame N executes on the graphics queue
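To get a feeling for what the steps above buy us, here is a small timeline model in standard C++. It is a sketch under simplifying assumptions, not the sample's code: every simulation step takes `simMs`, every render takes `renderMs`, the copy into render memory is free, and the two queues never compete for the same hardware. The names (`Span`, `Schedule`, `serialized`, `double_buffered`) are made up for illustration. I am also reading "a fence on the previous frame's rendering job" as: the simulation of frame n may not start before rendering of frame n-2 has finished, so that the render memory is free again by the time the simulation results of frame n-1 are copied into it.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Span {
    double start;
    double end;
};

struct Schedule {
    std::vector<Span> simulate;  // work on the compute queue
    std::vector<Span> render;    // work on the graphics queue

    // Time at which the last frame has been rendered.
    double total() const { return render.empty() ? 0.0 : render.back().end; }
};

// Original pattern: simulate, render, and only then simulate again.
Schedule serialized(int frames, double simMs, double renderMs) {
    assert(simMs >= 0.0 && renderMs >= 0.0);
    Schedule s;
    double t = 0.0;
    for (int n = 0; n < frames; ++n) {
        s.simulate.push_back({t, t + simMs});
        t += simMs;
        s.render.push_back({t, t + renderMs});
        t += renderMs;
    }
    return s;
}

// Double-buffered pattern: simulation of frame n starts once simulation of
// frame n-1 is done and rendering of frame n-2 has finished (assumption,
// see the text above). Rendering of frame n starts once its simulation is
// done and the graphics queue is free.
Schedule double_buffered(int frames, double simMs, double renderMs) {
    assert(simMs >= 0.0 && renderMs >= 0.0);
    Schedule s;
    for (int n = 0; n < frames; ++n) {
        const std::size_t i = static_cast<std::size_t>(n);
        const double prevSim = n >= 1 ? s.simulate[i - 1].end : 0.0;
        const double renderGate = n >= 2 ? s.render[i - 2].end : 0.0;
        const double simStart = std::max(prevSim, renderGate);
        s.simulate.push_back({simStart, simStart + simMs});

        const double prevRender = n >= 1 ? s.render[i - 1].end : 0.0;
        const double renderStart = std::max(s.simulate[i].end, prevRender);
        s.render.push_back({renderStart, renderStart + renderMs});
    }
    return s;
}
```

With four frames, `simMs = 4` and `renderMs = 1`, the serialized schedule finishes at 20 time units while the double-buffered one finishes at 17: only the very last render is still exposed, everything else hides behind the simulation. On real hardware the gain is smaller whenever both workloads need the same units.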

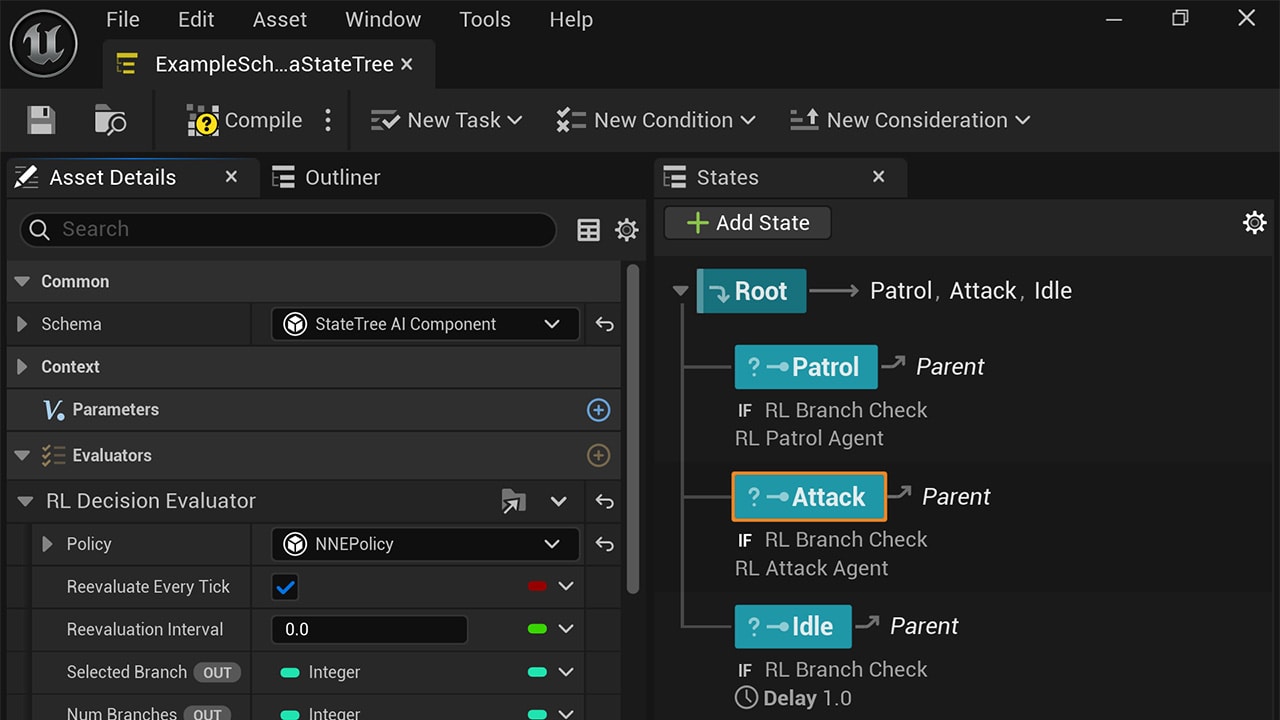

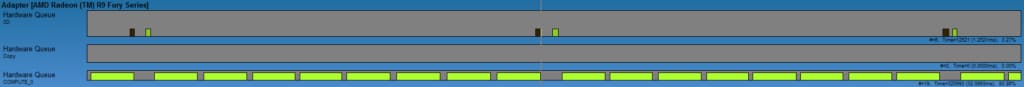

We can verify this in GPUView:

In the trace above, we can see that the graphics queue is busy around 20% of the time while the compute queue is used 100% of the time. Overall, we have the GPU busy “125%” of the time, as more than one queue is busy at once. We’re using the rasterizer and the blending units for rendering, which are idle during the simulation. This allows us to keep more units of the GPU busy and improve performance over sequential execution, where the compute units are mostly idle while the particles are rendered.

You can verify this yourself by setting AsynchronousComputeEnabled = false, which will remove the synchronization altogether and submit everything sequentially on the graphics queue. On an AMD Radeon Fury X, a frame takes approximately 8 ms without asynchronous compute. With asynchronous compute, we get this down to 7 ms, roughly a 15% improvement.

While nothing to sneeze at, this somewhat limited gain is due to the fact that the graphics workload also requires some compute. As we’re getting a 15% improvement from an overlap of about 25%, we can estimate that roughly half of the graphics workload is actually compute. Much higher gains can be obtained when overlapping tasks with widely different characteristics. For example, running an ambient occlusion kernel while shadow maps are rendered can end up being completely free, as they stress different execution resources.
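If you want to redo the back-of-the-envelope numbers, the arithmetic fits in a few lines of C++. This is just my reading of the estimate, with made-up helper names: the gain is measured from the two frame times, and the share of graphics work that was actually hidden is the observed gain divided by the gain we would get if the whole overlap were free.

```cpp
#include <cassert>

// Relative increase in frames per second when going from beforeMs to afterMs.
double throughput_gain(double beforeMs, double afterMs) {
    assert(beforeMs > 0.0 && afterMs > 0.0);
    return beforeMs / afterMs - 1.0;
}

// Relative reduction in time per frame.
double frame_time_saving(double beforeMs, double afterMs) {
    assert(beforeMs > 0.0 && afterMs > 0.0);
    return (beforeMs - afterMs) / beforeMs;
}

// Share of the overlapped work that ended up being free, assuming the best
// case would have been a gain equal to the overlap itself.
double hidden_fraction(double observedGain, double overlap) {
    assert(overlap > 0.0);
    return observedGain / overlap;
}
```

For 8 ms and 7 ms, `throughput_gain` gives about 0.14 and `frame_time_saving` gives 0.125, so "15%" is a rounded figure for measurements that are themselves approximate. `hidden_fraction(0.15, 0.25)` comes out at about 0.6: a bit more than half of the graphics work overlapped for free, and the rest had to share the compute units with the simulation.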

That’s it for this sample! You can find the source code on GitHub, where you can see exactly how the double-buffering and the synchronization have been changed.

Sample