HIP Ray Tracing

HIP RT is a ray tracing library for HIP, making it easy to write ray tracing applications in HIP.

Today, we released HIP RT – a new ray tracing library for HIP. HIP RT makes it easy to write a ray tracing application in HIP, with the library and API designed to be simple to use and easy to integrate into any existing HIP application.

Other ray tracing APIs introduce many new concepts. We designed HIP RT in a slightly different way, eliminating the need to learn many new kernel types. HIP RT introduces new object types such as hiprtGeometry and hiprtScene. Once the geometric information is passed to HIP RT, the library builds an acceleration data structure, which in turn is passed to a HIP kernel. Inside the kernel, the device-side library API can be used to perform intersection tests.

Current generation graphics cards, such as AMD RDNA™ 2 architecture-based GPUs, support hardware ray tracing acceleration to further optimize render times. However, up until now, applications that support HIP have not been able to utilize this hardware acceleration. HIP RT is designed to allow developers to take full advantage of the Ray Accelerators used for hardware ray tracing in AMD RDNA 2 architecture-based GPUs.

In this first release, we implemented some basic features which are required by ray tracing applications.

The triangle mesh is the basic primitive of HIP RT from which we build hiprtGeometry. In general, scenes are composed of many meshes. Developers can use hiprtScene on top of these meshes to create an acceleration structure that efficiently finds the relevant mesh when a ray intersects many of them. With it, developers can either find the closest hit of a ray or capture all hits along the ray. Capturing all hits is useful for shadows and transparency.
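The difference between the two query types can be sketched on the CPU in plain C++. This is only an illustration, not HIP RT code: the `Hit` struct and the `closestHit` and `allHits` functions are hypothetical names, and the candidate hits are assumed to have been produced already by some intersection routine.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical hit record for illustration; not the layout of hiprtHit.
struct Hit {
    float t;       // distance along the ray
    int   primId;  // index of the primitive that was hit
};

// Closest-hit query: keep only the nearest hit with t in (0, maxT].
// Returns false if no candidate falls inside that range.
bool closestHit(const std::vector<Hit>& candidates, float maxT, Hit& out) {
    assert(maxT > 0.0f);
    bool found = false;
    for (const Hit& h : candidates) {
        if (h.t <= 0.0f || h.t > maxT) continue;
        if (!found || h.t < out.t) {
            out = h;
            found = true;
        }
    }
    return found;
}

// All-hits query: return every hit with t in (0, maxT], ordered front to back.
std::vector<Hit> allHits(const std::vector<Hit>& candidates, float maxT) {
    assert(maxT > 0.0f);
    std::vector<Hit> hits;
    for (const Hit& h : candidates)
        if (h.t > 0.0f && h.t <= maxT) hits.push_back(h);
    std::sort(hits.begin(), hits.end(),
              [](const Hit& a, const Hit& b) { return a.t < b.t; });
    return hits;
}
```

A shadow ray only needs to know whether the all-hits list is empty, while a transparency effect would walk the sorted list and accumulate opacity hit by hit.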

To accelerate ray tracing, HIP RT builds an acceleration structure inside the library. No single structure fits all cases, so we provide several options to choose from. For example, you can choose a high quality build, which takes a little longer than the other options but produces a data structure that makes individual ray queries faster. Alternatively, advanced users can load a self-built bounding volume hierarchy (BVH) into HIP RT, which then performs the intersection on the GPU. As of today, this is a unique feature of HIP RT that no other ray tracing API supports. For example, you can build a high quality BVH for the static geometry in a scene offline and then load it at runtime.
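To make the role of a BVH more concrete, here is a minimal CPU-side sketch of the ray versus bounding box "slab" test that a BVH traversal typically performs at each node to decide whether a subtree can be skipped. This post does not describe HIP RT's internal layout or traversal, so the `Vec3` and `Aabb` types and the `rayHitsAabb` function are assumptions made purely for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <utility>

struct Vec3 { float x, y, z; };
struct Aabb { Vec3 lo, hi; };  // axis-aligned box given by its min and max corners

// Slab test: true if origin + t * dir lies inside the box for some t in [0, maxT].
bool rayHitsAabb(const Vec3& origin, const Vec3& dir, float maxT, const Aabb& box) {
    assert(maxT >= 0.0f);
    const float o[3]  = {origin.x, origin.y, origin.z};
    const float d[3]  = {dir.x, dir.y, dir.z};
    const float lo[3] = {box.lo.x, box.lo.y, box.lo.z};
    const float hi[3] = {box.hi.x, box.hi.y, box.hi.z};

    float tMin = 0.0f;
    float tMax = maxT;
    for (int axis = 0; axis < 3; ++axis) {
        if (d[axis] == 0.0f) {
            // Ray is parallel to this slab: it must start between the two planes.
            if (o[axis] < lo[axis] || o[axis] > hi[axis]) return false;
            continue;
        }
        float t0 = (lo[axis] - o[axis]) / d[axis];
        float t1 = (hi[axis] - o[axis]) / d[axis];
        if (t0 > t1) std::swap(t0, t1);
        // Shrink the interval in which the ray is inside all slabs seen so far.
        tMin = std::max(tMin, t0);
        tMax = std::min(tMax, t1);
        if (tMin > tMax) return false;
    }
    return true;
}
```

A higher quality build generally yields tighter, less overlapping boxes, so fewer of these tests pass per ray and less of the tree has to be visited.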

Our acceleration structure builder takes not only triangles from a triangle mesh, but also axis-aligned bounding boxes (AABBs). This is useful when objects change dynamically and the update logic runs on the GPU, as is the case with skinning. Because the AABBs are passed in a buffer, the data never leaves the GPU. The user simply needs to execute the geometry update logic on the GPU, write the updated AABBs to a buffer, then pass it to our builder.
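The update step that produces the AABBs can be sketched as follows. The sketch is written as host-side C++ so that it is easy to run; in the workflow described above the same per-triangle loop body would run on the GPU, for example with one thread per triangle. The `Vec3` and `Aabb` types and the `triangleAabbs` function are hypothetical and do not reflect the buffer layout HIP RT expects.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };
struct Aabb { Vec3 lo, hi; };

// Computes one bounding box per triangle from (possibly just updated) vertices.
// `indices` holds three vertex indices per triangle.
std::vector<Aabb> triangleAabbs(const std::vector<Vec3>& verts,
                                const std::vector<int>& indices) {
    assert(indices.size() % 3 == 0);
    std::vector<Aabb> boxes;
    boxes.reserve(indices.size() / 3);
    for (std::size_t i = 0; i < indices.size(); i += 3) {
        const Vec3& v0 = verts[indices[i + 0]];
        const Vec3& v1 = verts[indices[i + 1]];
        const Vec3& v2 = verts[indices[i + 2]];
        Aabb box;
        box.lo = {std::min({v0.x, v1.x, v2.x}),
                  std::min({v0.y, v1.y, v2.y}),
                  std::min({v0.z, v1.z, v2.z})};
        box.hi = {std::max({v0.x, v1.x, v2.x}),
                  std::max({v0.y, v1.y, v2.y}),
                  std::max({v0.z, v1.z, v2.z})};
        boxes.push_back(box);
    }
    return boxes;
}
```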

If your scene contains primitives other than triangles, HIP RT can handle them too. All you need to do is provide a custom intersection function for the geometry and register it in HIP RT. The function is then called whenever a ray intersects the primitive.
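As an illustration of what such a function computes, here is a minimal ray versus sphere test in plain C++. The signature is hypothetical: the exact form HIP RT expects for a custom intersection function, and how it is registered, are defined by the library and its tutorials and are not reproduced here.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static float dot(const Vec3& a, const Vec3& b) {
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

// Hypothetical custom intersection routine for a sphere primitive.
// On a hit, writes the nearest distance in [0, maxT] to tHit and returns true.
bool intersectSphere(const Vec3& origin, const Vec3& dir, float maxT,
                     const Vec3& center, float radius, float& tHit) {
    assert(radius > 0.0f);
    const Vec3 oc = {origin.x - center.x, origin.y - center.y, origin.z - center.z};
    // Solve |oc + t * dir|^2 = radius^2, a quadratic in t.
    const float a = dot(dir, dir);
    const float halfB = dot(oc, dir);
    const float c = dot(oc, oc) - radius * radius;
    const float disc = halfB * halfB - a * c;
    if (a == 0.0f || disc < 0.0f) return false;  // degenerate ray or no real root

    const float s = std::sqrt(disc);
    float t = (-halfB - s) / a;            // nearer root
    if (t < 0.0f) t = (-halfB + s) / a;    // origin is inside the sphere
    if (t < 0.0f || t > maxT) return false;
    tHit = t;
    return true;
}
```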

Is the exposure time of your virtual camera finite? Then you may want to try our motion blur. All you need to do is specify transforms at key frames within the exposure time. The key frames do not need to be uniformly spaced: you pass the time of each key frame within the exposure interval.
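The idea of non-uniformly spaced key frames can be sketched as a simple interpolation at the time a ray is cast. The example below is a hedged illustration only: the `KeyFrame` struct and `translationAt` function are hypothetical, only the translation is interpolated (a full transform would also need rotation and scale), and linear interpolation is an assumption rather than a statement about how HIP RT blends transforms.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

// Hypothetical key frame: a translation valid at a given time in the exposure.
struct KeyFrame {
    float time;
    Vec3  translation;
};

// Linearly interpolates the translation at time t.
// `keys` must be non-empty and sorted by strictly increasing time.
Vec3 translationAt(const std::vector<KeyFrame>& keys, float t) {
    assert(!keys.empty());
    if (t <= keys.front().time) return keys.front().translation;
    if (t >= keys.back().time) return keys.back().translation;

    std::size_t i = 1;
    while (keys[i].time < t) ++i;  // first key frame at or after t
    const KeyFrame& a = keys[i - 1];
    const KeyFrame& b = keys[i];
    const float w = (t - a.time) / (b.time - a.time);  // 0 at a, 1 at b
    return {a.translation.x + w * (b.translation.x - a.translation.x),
            a.translation.y + w * (b.translation.y - a.translation.y),
            a.translation.z + w * (b.translation.z - a.translation.z)};
}
```

Each ray would be assigned a random time within the exposure interval, and the object transform evaluated at that time, so that averaging many rays per pixel produces the blur.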

Now that you’ve got an idea of the features of HIP RT, let’s look at the API.

Here are some basic examples, but more detail is available in the tutorial.

After you create a HIP context and device, you pass them to hiprtCreateContext to create a HIP RT context. You can find the code at 00_context_creation.

Once you have created hiprtContext, the next step is to build hiprtGeometry, which is the data structure we need to execute ray intersection on the device side. The steps to create hiprtGeometry are as follows:

    hiprtTriangleMeshPrimitive mesh;
    // set up the mesh

    // get the temporary buffer size
    hiprtGetGeometryBuildTemporaryBufferSize( ... );

    // create hiprtGeometry
    hiprtGeometry geom;
    hiprtCreateGeometry( ... );

    // build hiprtGeometry
    hiprtBuildGeometry( ... );

You first create a hiprtTriangleMeshPrimitive object, then create a hiprtGeometry by calling hiprtCreateGeometry(), which is then built by hiprtBuildGeometry(). You can find sample code in 01_geom_intersection.

The code above prepares everything needed to cast rays on the device side, so now let’s move to the device code. Our first kernel can be very simple:

    #include <hiprt/hiprt_device.h>

    extern "C" __global__ void MeshIntersectionKernel(hiprtGeometry geom, unsigned char* gDst, int2 cRes)
    {
        // One thread per pixel.
        const int gIdx = blockIdx.x * blockDim.x + threadIdx.x;
        const int gIdy = blockIdx.y * blockDim.y + threadIdx.y;

        // Ray starting on the z = -1 plane and pointing along +z.
        hiprtRay ray;
        ray.origin = { gIdx / (float)cRes.x, gIdy / (float)cRes.y, -1.0f };
        ray.direction = { 0.0f, 0.0f, 1.0f };
        ray.maxT = 1000.f;

        // Find the closest hit against the geometry built on the host.
        hiprtGeomTraversalClosest tr(geom, ray);
        hiprtHit hit = tr.getNextHit();
        bool hasHit = tr.hasHit();

        // Write an RGBA pixel: a color gradient on a hit, black otherwise.
        int dstIdx = gIdx + gIdy * cRes.x;
        gDst[ dstIdx * 4 + 0 ] = hasHit ? ((float)gIdx / cRes.x) * 255 : 0;
        gDst[ dstIdx * 4 + 1 ] = hasHit ? ((float)gIdy / cRes.y) * 255 : 0;
        gDst[ dstIdx * 4 + 2 ] = 0;
        gDst[ dstIdx * 4 + 3 ] = 255;
    }

To use the built-in device functions, you need to include hiprt_device.h. The kernel receives the hiprtGeometry object which we just built on the host side. To execute the ray-geometry intersection, you fill in a hiprtRay, which is simply the ray origin and direction plus a maximum distance, then create a hiprtGeomTraversalClosest object and call getNextHit(), which returns the hit information. It’s that simple!
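For readers who want to check the ray setup and pixel layout without a GPU, the kernel's logic can be emulated on the CPU. The sketch below is an assumption-laden stand-in, not HIP RT code: the library traversal is replaced by a single Möller–Trumbore ray/triangle test, the kernel's thread grid becomes two nested loops, and the `hitTriangle` and `render` functions are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 { float x, y, z; };

static Vec3 sub(const Vec3& a, const Vec3& b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static float dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static Vec3 cross(const Vec3& a, const Vec3& b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

// Moller-Trumbore ray/triangle test; stands in for the library traversal.
bool hitTriangle(const Vec3& o, const Vec3& d, float maxT,
                 const Vec3& v0, const Vec3& v1, const Vec3& v2) {
    const Vec3 e1 = sub(v1, v0);
    const Vec3 e2 = sub(v2, v0);
    const Vec3 p = cross(d, e2);
    const float det = dot(e1, p);
    if (std::fabs(det) < 1e-8f) return false;  // ray parallel to the triangle
    const float inv = 1.0f / det;
    const Vec3 s = sub(o, v0);
    const float u = dot(s, p) * inv;           // first barycentric coordinate
    if (u < 0.0f || u > 1.0f) return false;
    const Vec3 q = cross(s, e1);
    const float v = dot(d, q) * inv;           // second barycentric coordinate
    if (v < 0.0f || u + v > 1.0f) return false;
    const float t = dot(e2, q) * inv;          // distance along the ray
    return t > 0.0f && t <= maxT;
}

// CPU version of the kernel body: one loop iteration per pixel, RGBA output.
std::vector<unsigned char> render(int width, int height,
                                  const Vec3& v0, const Vec3& v1, const Vec3& v2) {
    assert(width > 0 && height > 0);
    std::vector<unsigned char> dst(static_cast<std::size_t>(width) * height * 4);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const Vec3 origin = {x / (float)width, y / (float)height, -1.0f};
            const Vec3 direction = {0.0f, 0.0f, 1.0f};
            const bool hasHit = hitTriangle(origin, direction, 1000.f, v0, v1, v2);
            const int idx = x + y * width;
            dst[idx * 4 + 0] = hasHit ? (unsigned char)(((float)x / width) * 255) : 0;
            dst[idx * 4 + 1] = hasHit ? (unsigned char)(((float)y / height) * 255) : 0;
            dst[idx * 4 + 2] = 0;
            dst[idx * 4 + 3] = 255;
        }
    }
    return dst;
}
```

Note that the rays here are parallel (an orthographic view of the unit square), exactly as in the kernel, so only geometry whose projection falls within x and y in [0, 1) is visible.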

If the scene contains only a single triangle mesh, building hiprtGeometry is sufficient. In most cases, however, the scene contains many objects which we also want to consider. For this we have the hiprtScene object, which bundles these hiprtGeometry objects together into a single object that we can pass to the device side. hiprtScene creation is as follows:

    hiprtScene scene;

    // create hiprtScene
    hiprtCreateScene( ... );

    // build hiprtScene
    hiprtBuildScene( ... );

Although the inputs are different from building hiprtGeometry, the structure is the same. This time we pass the scene information by storing it in a hiprtSceneBuildInput object, which is passed to hiprtBuildScene(). Sample code can be found in 02_scene_intersection.

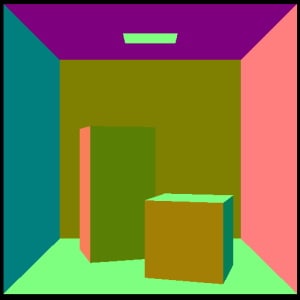

On the device side, we can reuse the program above. All we need to do is receive a hiprtScene instead of a hiprtGeometry and create a hiprtSceneTraversalClosest object. You should then be able to trace a ray through the scene and render an image like this (of course our favorite one!).

Although we just showed the very basics here, you can find some interesting tutorials in our SDK, such as shared stack use for better performance, motion blur, and custom intersection functions.

In this blog post, we introduced HIP RT, a ray tracing library in HIP, and took a quick look at the API. We hope you’ll find this first release useful for your projects. You can expect improvements in HIP RT in the future, so stay tuned.

Visit our new HIP RT page to download it now: